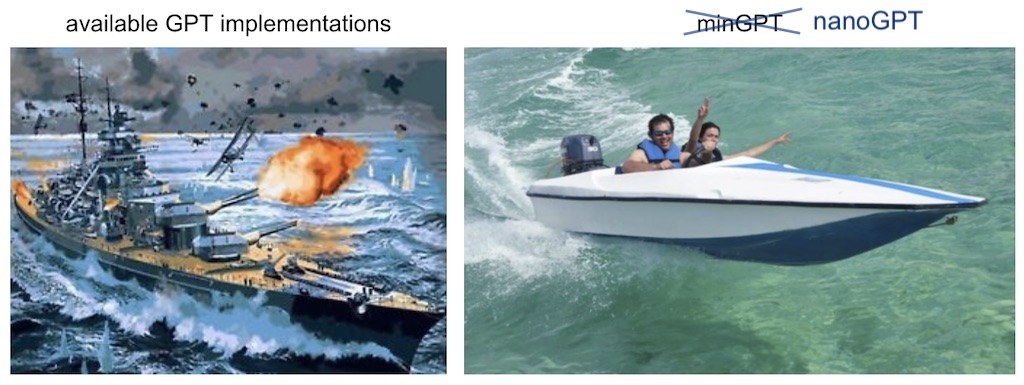

Want to understand how GPT models actually work under the hood? nanoGPT is your golden ticket. While most AI frameworks hide complexity behind layers of abstraction, nanoGPT gives you the raw mechanics in just two files: train.py, a training loop of roughly 300 lines, and model.py, a GPT model definition of roughly 300 lines. That’s it. No enterprise bloat, no mystery black boxes, just clean, hackable code that reproduces GPT-2 (124M).

The numbers speak for themselves: you can train a 124M-parameter model that matches OpenAI’s GPT-2 performance, and the repo covers everything from character-level training on Shakespeare (about 3 minutes on a single A100) to a full OpenWebText reproduction. The codebase is so minimal that you can actually read and understand every line, making it perfect for research, experimentation, or learning how transformers really tick. Plus, it loads OpenAI’s pretrained GPT-2 weights out of the box for fine-tuning.
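To make the "124M" figure concrete, here is a minimal sketch that counts the parameters by hand. It assumes the standard GPT-2 small hyperparameters (12 layers, 768-dimensional embeddings, a 50,257-token vocabulary, a 1,024-token context) and an output head that shares its weights with the token embedding. The `GPT2SmallConfig` class and `count_params` function are hypothetical names for illustration, not code from the repository.

```python
from dataclasses import dataclass


@dataclass
class GPT2SmallConfig:
    # Assumed GPT-2 small hyperparameters (illustrative, not read from the repo).
    n_layer: int = 12
    n_embd: int = 768
    vocab_size: int = 50257
    block_size: int = 1024


def count_params(cfg: GPT2SmallConfig) -> int:
    """Count trainable parameters, assuming the output head is tied to the token embedding."""
    d = cfg.n_embd
    tok_emb = cfg.vocab_size * d           # token embedding table
    pos_emb = cfg.block_size * d           # learned position embedding table
    layer_norm = 2 * d                     # scale + bias
    # Attention: fused q/k/v projection plus output projection, each with bias.
    attn = (d * 3 * d + 3 * d) + (d * d + d)
    # MLP: expand to 4*d, then project back to d, each with bias.
    mlp = (d * 4 * d + 4 * d) + (4 * d * d + d)
    block = 2 * layer_norm + attn + mlp    # two layer norms per transformer block
    final_ln = layer_norm
    return tok_emb + pos_emb + cfg.n_layer * block + final_ln


total = count_params(GPT2SmallConfig())
print(f"{total:,} parameters (~{total / 1e6:.0f}M)")
# prints "124,439,808 parameters (~124M)"
```

Under these assumptions, roughly 85M of the parameters sit in the 12 transformer blocks and about 39M in the embedding tables, which is why small GPT models are so sensitive to vocabulary size.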

With 53K+ stars and Andrej Karpathy’s reputation behind it, nanoGPT has become the go-to starting point for anyone serious about understanding or customizing GPT architectures. Fair warning: the author now recommends checking out nanochat for newer projects, but nanoGPT remains one of the clearest windows into transformer training you’ll find anywhere.

⭐ Stars: 53129

💻 Language: Python

🔗 Repository: karpathy/nanoGPT