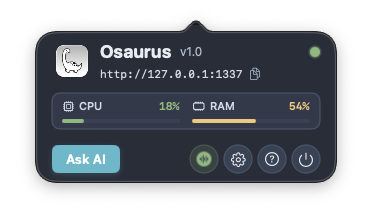

Finally, someone built the AI infrastructure macOS actually needed. Instead of juggling multiple AI services, terminal windows, and API keys, Osaurus gives you one native runtime that handles everything from local MLX inference on Apple Silicon to cloud providers like OpenAI and Anthropic. The killer feature? It speaks the Model Context Protocol (MCP), so your AI agents can share the same tools across different apps.

This isn’t just another AI chat wrapper. You get drop-in API compatibility (so your existing tools just work), a plugin system for extending functionality, autonomous agents that can handle multi-step tasks, and personas for creating specialized AI assistants. The fact that it’s built in Swift and optimized for Apple Silicon shows its developers understand the platform. With 3,300+ stars and active development, it’s becoming the de facto AI edge runtime for Mac developers.

Whether you’re building AI-powered apps or just want a better way to run local models without the Python dependency hell, this is worth installing. One `brew install --cask osaurus` and you’re running Llama models locally while keeping your OpenAI workflows intact.
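To make "drop-in API compatibility" concrete, here is a minimal sketch of what pointing an existing OpenAI-style client at a local server might look like, using only the Python standard library. The base URL, port, and model name below are assumptions for illustration, not values taken from the Osaurus docs; check the app’s settings for the real ones. The helper names `build_chat_request` and `send` are hypothetical.

```python
import json
import urllib.request

# Assumptions (not confirmed by the source): the local server address/port
# and the model identifier. Replace both with whatever your install reports.
BASE_URL = "http://127.0.0.1:1337/v1"
MODEL = "llama-3.2-3b-instruct-4bit"


def build_chat_request(prompt, base_url=BASE_URL, model=MODEL):
    """Build a POST request in the OpenAI chat-completions shape."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request):
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    # Standard OpenAI-style response layout: choices[0].message.content
    return body["choices"][0]["message"]["content"]
```

With a server running, `print(send(build_chat_request("Say hello in one sentence.")))` would print the model’s reply. The point of the sketch is that only the base URL changes: the request body and response parsing are the same ones an OpenAI-targeted tool already uses.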

⭐ Stars: 3346

💻 Language: Swift

🔗 Repository: dinoki-ai/osaurus